Students Need Reliable Success Data From Feds, Not Media

With a headline that looks as if it were taken from The Onion, a recent U.S. News & World Report analysis on colleges and universities that succeed at graduating higher-income students lacks important context, and frankly, ignores one of the inherent missions of our postsecondary system: to provide the education and supports necessary for all students to graduate.

At a time when national conversations center on college access and, in particular, success for low-income students (see last month’s White House summit on college opportunity, for example), why would U.S. News focus an analysis on the success of wealthy students? Low-income students today are still not enrolling in college at the same rate higher-income students did 40 years ago. In fact, students from high-income families are 7 times more likely than low-income students to hold a bachelor’s degree by the age of 24.

If our country is to close those opportunity and success gaps, we need to start with better and more complete data from all institutions of higher education — data that can only be collected by the federal government. Otherwise, students and families will be forced to depend on media sources like U.S. News that only collect data voluntarily submitted by institutions and then risk carrying out flawed analyses.

We applaud U.S. News for attempting to fill the gap that the government has left open. But by focusing an analysis strictly on the success of wealthy students, U.S. News failed to ask the glaring, vastly more important policy question: How well are individual colleges doing at graduating low-income students compared with upper-income peers attending the same institution?

A few months ago, the news publication tried to answer that important question, but in an inaccurate and misleading way. Instead of comparing success rates across distinct student subgroups, it compared the rate for low-income students with the rate for all students. That’s an inaccurate comparison, because low-income students are a subset of all students.
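To make the arithmetic concrete, here is a minimal sketch in Python. The college, the cohort sizes, and the graduation counts are all hypothetical, invented for illustration; none of them come from U.S. News or any federal data set. The point is only that the all-students rate is pooled across every income group, so comparing low-income students against it can tell a different story than comparing them against higher-income peers.

```python
# Hypothetical cohort for one college. These figures are invented for
# illustration and do not come from U.S. News or any real institution.
cohort = {
    "low":    {"enrolled": 300, "graduated": 180},
    "middle": {"enrolled": 500, "graduated": 250},
    "high":   {"enrolled": 200, "graduated": 140},
}

def grad_rate(groups):
    """Graduation rate pooled over the given income groups."""
    enrolled = sum(cohort[g]["enrolled"] for g in groups)
    graduated = sum(cohort[g]["graduated"] for g in groups)
    return graduated / enrolled

low = grad_rate(["low"])
high = grad_rate(["high"])
everyone = grad_rate(["low", "middle", "high"])  # includes low-income students

# Gaps in percentage points: positive means low-income students do better.
gap_vs_all = (low - everyone) * 100
gap_vs_high = (low - high) * 100

print(f"low-income: {low:.0%}, all students: {everyone:.0%}, high-income: {high:.0%}")
print(f"low-income vs. all students: {gap_vs_all:+.0f} points")
print(f"low-income vs. high-income:  {gap_vs_high:+.0f} points")
```

In this made-up case, low-income students graduate at a rate a few points above the all-students rate, which would earn an "over-performing" label, yet they trail their high-income classmates by about ten points. The subgroup-to-subgroup comparison is the one that answers the policy question.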

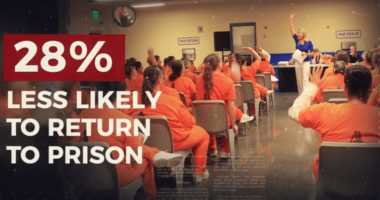

When we pull together U.S. News’ series of articles on individual college performance by socioeconomic status (high-income, middle-income, low-income), we see that the gaps are typically far more dramatic than what the publication reports. (That’s because the graduation rate for all students is an average across every income group, low-income students included, so it sits between the subgroup rates and masks the distance between them.) In a few cases, we even see that some schools U.S. News reported to be over-performing (meaning they graduate low-income students at a higher rate than all students) were actually underperforming when graduation rates of low-income students are compared with those of their higher-income peers. For example:

Moreover, labeling colleges that graduate low-income students at a higher rate than all students as “over-performing” is misleading and unhelpful. Even when we provide the correct comparison group for these institutions, U.S. News failed to make clear to students that success for one student group should not come at the expense of another.

These examples show the danger in presenting partial or incomplete data when trying to depict examples of institutional performance with students from different socioeconomic backgrounds. While some data can be better than no data, they can only be helpful to consumers when they are presented appropriately: with the proper comparison point and the proper interpretation.

U.S. News’ data, however, will never be representative of larger trends so long as institutions are not required to report them to the federal government. To get a complete understanding of institutional performance with students from different socioeconomic backgrounds, and to move the needle on low-income access and success, the federal government must require institutions to report these data immediately and make them easily accessible.

Information, after all, is power.